Recently, a handful of customers began reporting two similar performance issues when playtesting their iOS games: some recorded playtest videos showed a lower frame rate than they should have, and some players reported a little extra lag during their playtesting sessions.

Now, we built PlaytestCloud to test the newest 3D games with a minimal performance impact, and for years now, that’s how it’s been. That’s how we want to keep it.

So even though these performance issues weren't too severe, they had our full attention.

Faster devices, slower performance?

What surprised us the most was that the issues were most prevalent on the iPhone 7 + 7 Plus, iPhone 8 + 8 Plus, and the iPad Pro. Naturally, we assumed that the recording would be faster on these devices due to the faster hardware, but that’s not at all what we were seeing.

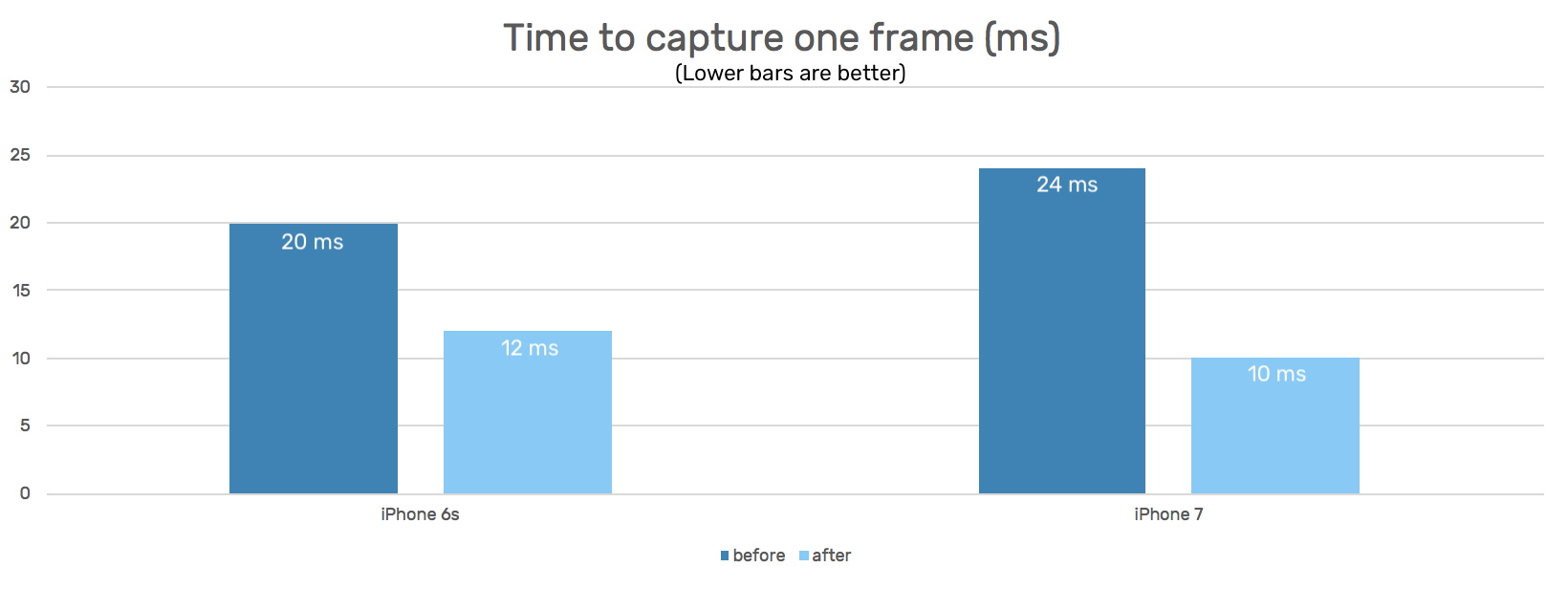

What follows is a highly technical blog post. It goes into detail about what happened, how we fixed it, and how the fix led to an overall improvement in iOS screen recording, with speed increases of 2.4x on iPhone 7 and later and 1.67x on earlier devices.

Note: The technical nature of this blog post might not be for everyone, and that's okay! Feel free to skip ahead to the Wrap-up section at the end.

Profiling

Our first step toward understanding which parts of the screen recording were causing the slowdown was to run a CPU profiler. We tested our screen recording with a basic OpenGL app that has a predictable performance profile, so we could gather reliable data.

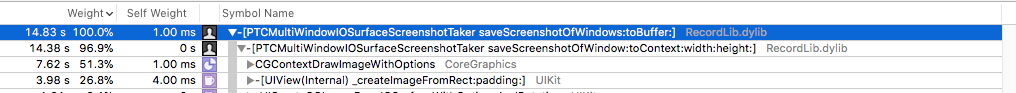

Using Apple's Instruments Time Profiler, we found that a large percentage of CPU time was spent in a single function: CGContextDrawImageWithOptions, alias CGContextDrawImage.

To understand the significance of this function, here's how the PlaytestCloud wrapper captures a frame on iOS:

    // Open a context on a CVPixelBuffer for drawing into:
    CGContextRef context = CGBitmapContextCreate(...);

    // Iterate over all visible windows
    for (UIWindow *window in visibleWindows) {
        // 1. Render each window into an IOSurface
        IOSurfaceRef screenshot = [window _createImageFromRect:window.bounds padding:UIEdgeInsetsZero];
        // [...]

        // 2. Draw each IOSurface into the CVPixelBuffer
        CGImageRef cgImage = UICreateCGImageFromIOSurface(screenshot);
        CGContextDrawImage(context, rect, cgImage);
    }

    // Pass the CVPixelBuffer to the h264 encoder
    // ..

CGContextDrawImage draws one image onto another one, doing alpha blending and pixel format conversion in the process, if necessary. We found it interesting that the largest part of our time was spent in CGContextDrawImage. What this means is that capturing the windows is relatively fast, but blending those into one final image takes some time.

Faster blending with Metal

We assume that CGContextDrawImage as part of CoreGraphics/Quartz2D is a pure CPU function in which the CPU iterates over the pixels of the source image roughly sequentially. This can be especially costly if the images are large (such as a screen capture of the iPad Pro or iPhone 8 Plus screen) and if it needs to convert from one pixel format to another. Also, the CPU isn't the best choice for image processing when the device also has a powerful GPU that is much faster at image rendering.
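To make the cost of a CPU-side blend concrete, here is a minimal C sketch of a sequential source-over blend on 8-bit BGRA pixels with premultiplied alpha. It is an illustration only, not CoreGraphics' actual implementation; the function names `blend_channel` and `blend_bgra_over` are hypothetical.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Blend one premultiplied 8-bit channel: out = src + dst * (1 - srcAlpha). */
static uint8_t blend_channel(uint8_t src, uint8_t dst, uint8_t src_alpha) {
    /* Adding 127 before dividing by 255 rounds to the nearest integer. */
    unsigned value = src + (dst * (255u - src_alpha) + 127u) / 255u;
    return (uint8_t)(value > 255u ? 255u : value);
}

/* Source-over blend of `count` BGRA pixels (4 bytes each), visited one by one. */
static void blend_bgra_over(const uint8_t *src, uint8_t *dst, size_t count) {
    assert(src != NULL && dst != NULL);
    for (size_t i = 0; i < count; i++) {
        const uint8_t *s = src + 4 * i;
        uint8_t *d = dst + 4 * i;
        const uint8_t alpha = s[3];
        for (int c = 0; c < 4; c++) {   /* B, G, R, A */
            d[c] = blend_channel(s[c], d[c], alpha);
        }
    }
}
```

Every pixel costs several multiplications and divisions, and the loop touches each byte of both images, so the work grows linearly with the screen resolution. A GPU runs the same per-pixel arithmetic for many pixels in parallel.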

We decided it would be best to run this blending step on the GPU by implementing it in Apple's Metal Graphics API. Having only dabbled with Metal before, we were pleasantly surprised with how easy it was to write code that does what we previously did with CGContextDrawImage purely on the GPU.

Measuring the speedup

To quantify the speedup we achieved with our Metal-based blending, we compared the actual wall-clock time that passes while we capture and blend all visible windows into the output CVPixelBuffer. As Apple's Instruments only provides aggregate timings for the time spent in a function, we turned to grabbing timestamps using [NSDate date] and comparing them:

    #ifdef DEBUG
    NSDate *methodStart = [NSDate date];
    #endif

    // ... capture and blend windows

    #ifdef DEBUG
    NSDate *methodFinish = [NSDate date];
    NSTimeInterval executionTime = [methodFinish timeIntervalSinceDate:methodStart];
    NSLog(@"execution time = %f", executionTime);
    #endif

Of course, this assumes that every frame capture has roughly the same execution time. Fortunately, this held true because there was little change in the work done each frame.
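One caveat with date-based timestamps is that they follow the system clock, which can be adjusted while a measurement is running. As a hedged alternative, the sketch below takes the same before/after measurement with a monotonic clock in plain C. It assumes a platform that provides POSIX `clock_gettime` with `CLOCK_MONOTONIC`; the helper names `monotonic_now`, `elapsed_seconds`, and `speedup` are hypothetical and not part of our wrapper.

```c
#define _POSIX_C_SOURCE 200809L  /* exposes clock_gettime under strict -std=c11 */
#include <assert.h>
#include <time.h>

/* Read the monotonic clock, which does not jump when the wall clock is adjusted. */
static struct timespec monotonic_now(void) {
    struct timespec ts;
    int rc = clock_gettime(CLOCK_MONOTONIC, &ts);
    assert(rc == 0);
    (void)rc;  /* silence the unused-variable warning when NDEBUG is set */
    return ts;
}

/* Seconds elapsed between two timestamps. */
static double elapsed_seconds(struct timespec start, struct timespec end) {
    return (double)(end.tv_sec - start.tv_sec)
         + (double)(end.tv_nsec - start.tv_nsec) / 1e9;
}

/* Speedup factor: how many times faster `after_seconds` is than `before_seconds`. */
static double speedup(double before_seconds, double after_seconds) {
    assert(after_seconds > 0.0);
    return before_seconds / after_seconds;
}
```

In use, you would call `monotonic_now()` before and after the capture-and-blend step, pass both values to `elapsed_seconds`, and feed the timings of the old and new code paths to `speedup`.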

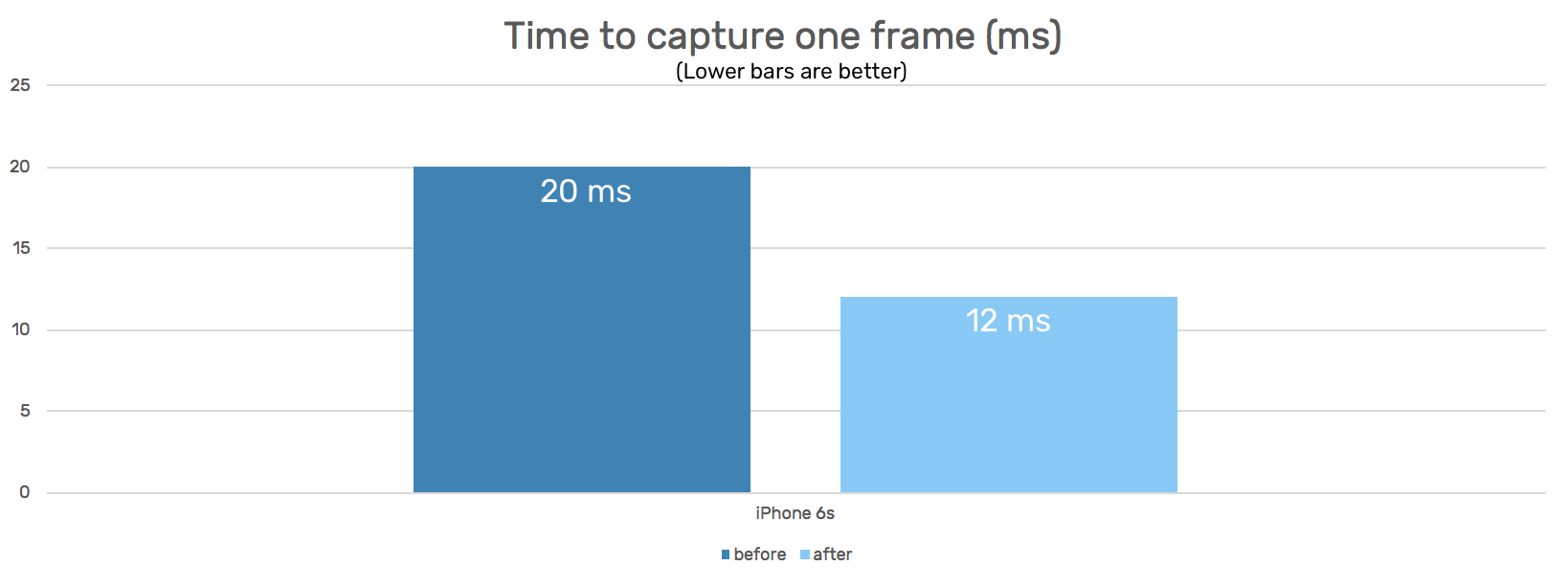

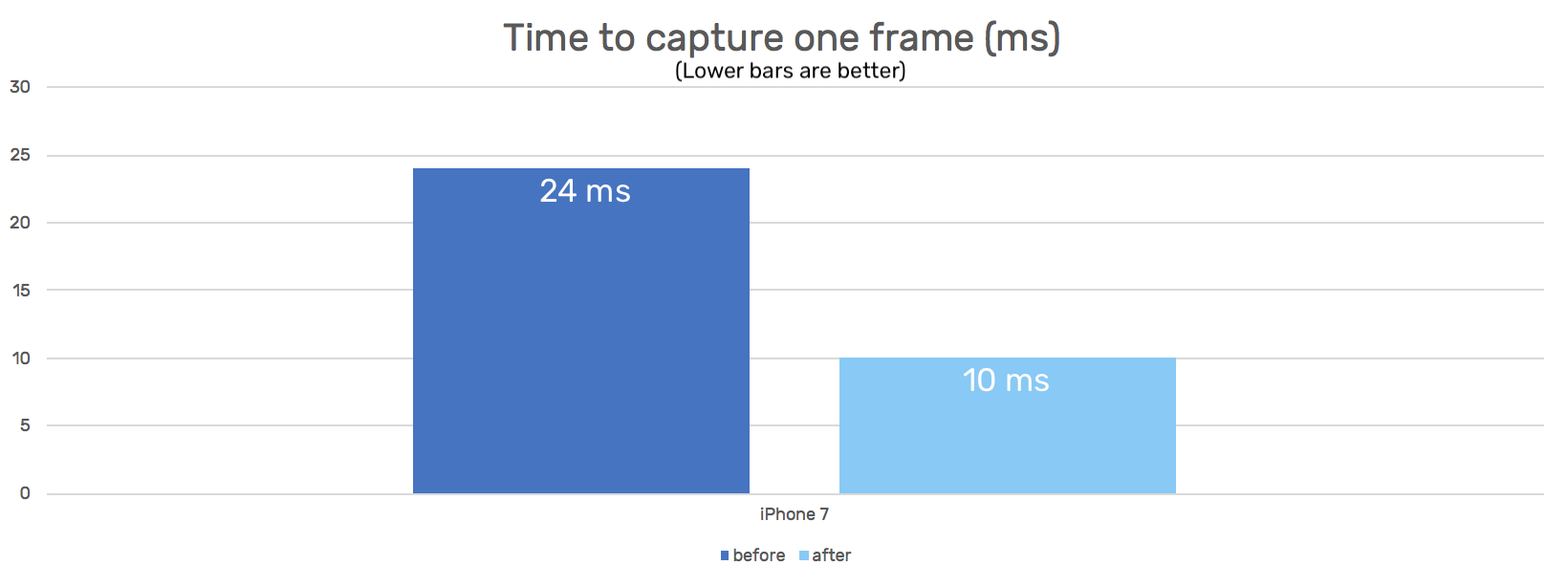

Another happy surprise was that using Metal to blend the images achieved a 1.67x speedup on the iPhone 6s.

Wide Color

When trying out our new Metal-accelerated blending on an iPhone 7, we were shocked to find out that the resulting video came out looking like this:

This result puzzled us for quite a bit. What was going on here that would make the image look like that? Eventually, we found out that the IOSurfaces we capture with [window _createImageFromRect:window.bounds padding:UIEdgeInsetsZero]; would be in pixel format 32BGRA on iPhone 6s but in BGR10A8 on iPhone 7 – which we found to be strange. While 32BGRA is a common format with 32 bits per pixel, BGR10A8 implies 38 bits per pixel. What are the additional bits used for?

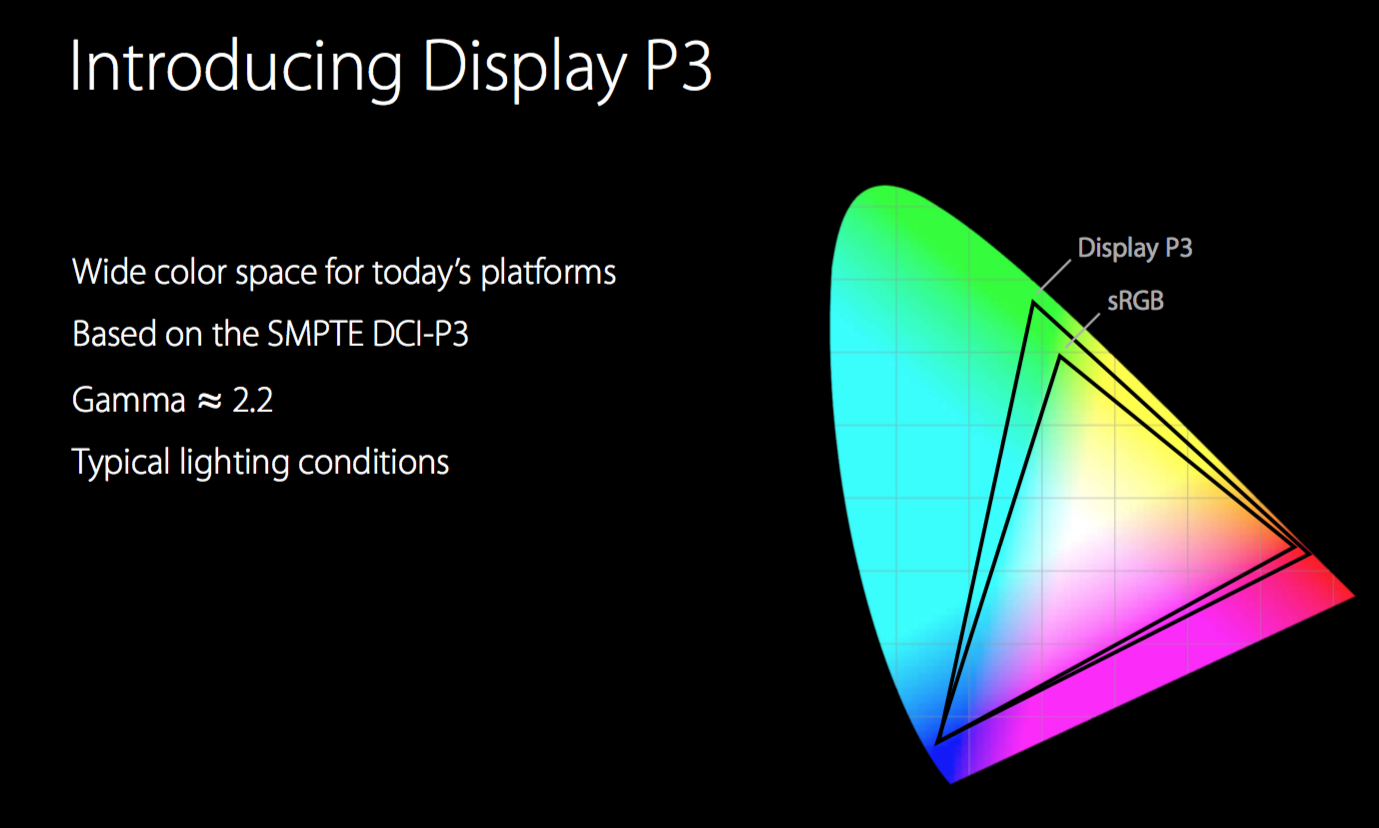

Well, with the iPhone 7 and iPad Pro, Apple introduced a new screen technology (P3) that is capable of rendering more colors than the screens of previous devices.

Display P3 is a color space within the RGB color model that represents a larger spectrum of colors than the current industry standard sRGB. Display P3 gives a 25% larger color space when compared to sRGB, and when a color space is larger than sRGB, it's informally referred to as a wide gamut color space – same as any other display type which supports a color space larger than sRGB.

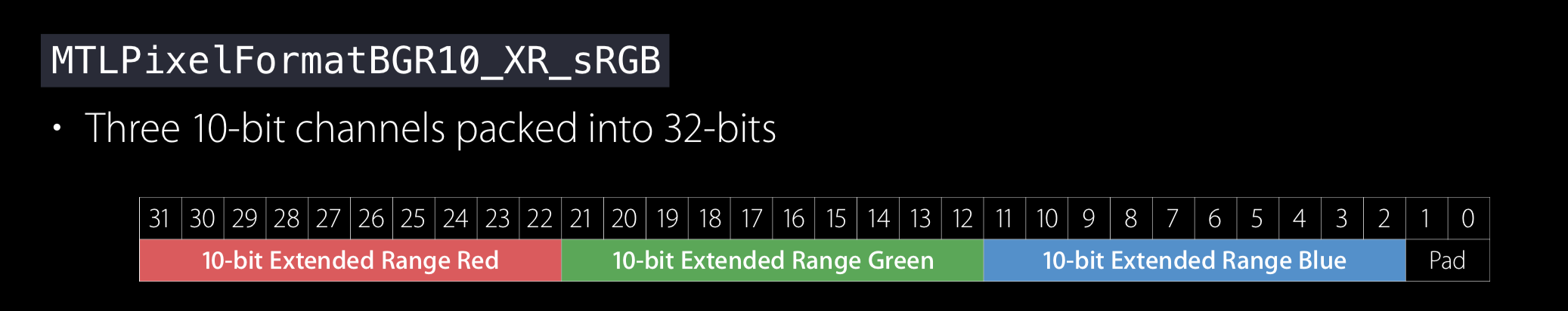

As these additional colors have to be expressed somehow, Apple also added a new color format called "Extended RGB" to iOS that extends the RGB range to values less than 0 and higher than 255. To store these additional values, you can use pixel formats such as BGR10A8, which is a planar pixel format. This means that it stores the color values for all pixels in one continuous block of memory (BGR10) and then, right after that, the alpha values in another continuous block (A8).

So the IOSurface we receive from [window _createImageFromRect:window.bounds padding:UIEdgeInsetsZero] actually has two planes: one with 30 bits of color values per pixel, and a second one with an 8-bit alpha value per pixel.
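To illustrate what reading such a two-plane image involves, here is a small C sketch that fetches one pixel from a color plane and a separate alpha plane. The exact memory layout is an assumption made for illustration: each color pixel is taken to be one 32-bit word holding 10-bit blue, green, and red fields in bits 0-9, 10-19, and 20-29, and rows are assumed to be tightly packed. The real BGR10A8 layout and its extended-range encoding may differ. `WidePixel` and `read_biplanar_pixel` are hypothetical names.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* One decoded pixel: three raw 10-bit color fields plus 8-bit alpha. */
typedef struct {
    uint16_t b, g, r;
    uint8_t a;
} WidePixel;

/* ASSUMED layout: plane 0 holds one 32-bit word per pixel (B in bits 0-9,
 * G in bits 10-19, R in bits 20-29, top two bits unused); plane 1 holds
 * one alpha byte per pixel. Rows are assumed to have no padding. */
static WidePixel read_biplanar_pixel(const uint32_t *color_plane,
                                     const uint8_t *alpha_plane,
                                     size_t width, size_t x, size_t y) {
    assert(color_plane != NULL && alpha_plane != NULL && x < width);
    const size_t index = y * width + x;      /* same index into both planes */
    const uint32_t word = color_plane[index];
    WidePixel pixel;
    pixel.b = (uint16_t)(word & 0x3FFu);
    pixel.g = (uint16_t)((word >> 10) & 0x3FFu);
    pixel.r = (uint16_t)((word >> 20) & 0x3FFu);
    pixel.a = alpha_plane[index];
    return pixel;
}
```

The point of the sketch is that color and alpha for the same pixel live in two different memory regions, so any code that treats the surface as a single interleaved 32BGRA buffer will read garbage.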

It dawned on us that this was the reason for the disproportionate performance hit our recording took on iPhone 7 and later devices: On these devices CGContextDrawImage had to perform an expensive pixel format conversion from the biplanar BGR10A8 input images to the 32BGRA output image in addition to alpha-blending the pixels. In fact, the iPhone 7 and later had to do more work in CGContextDrawImage than previous devices, which explains why it would take longer even though the hardware executing it is faster.

To fix our scrambled video recording, we implemented a Metal fragment shader that effectively combines the two planes, each passed to it as one separate texture:

    #include <metal_stdlib>
    using namespace metal;

    // RasterizerData is the vertex stage's output struct (not shown here).

    // Fragment function: takes color from plane 0 and alpha from plane 1
    fragment half4 wideColorFragmentShader(RasterizerData in [[stage_in]],
                                           texture2d<half> colorTexture [[ texture(0) ]],
                                           texture2d<half> alphaTexture [[ texture(1) ]]) {
        constexpr sampler textureSampler (mag_filter::linear, min_filter::linear);
        const half4 colorSample = colorTexture.sample(textureSampler, in.textureCoordinate);
        const half4 alphaSample = alphaTexture.sample(textureSampler, in.textureCoordinate);
        return half4(colorSample.rgb, alphaSample.a);
    }

To our delight, this led to a 2.4x performance improvement on iPhone 7 and later devices.

Bonus: Less blocking the main thread

While optimizing these parts of our screen capturing code we also managed to reduce the amount of blocking it imposes on the main thread of the app by optimizing dispatches, which should yield further performance benefits for all games while they are being recorded by the PlaytestCloud wrapper.

Wrap-up

The final result has blown us away. We are delighted by the iOS screen recording performance gains, and we are equally delighted to have removed the performance issues we were experiencing at times on wide-color iOS devices.

On a personal level, this was a fun exercise in graphics programming. The fact that it led to an incredible increase in iOS screen recording performance is phenomenal. I couldn’t be happier with the result, and I hope that you’re all as excited about this as we are!
